
By the time the team contacted us, their booking platform was already running dozens of microservices in production on Docker Swarm. Swarm had supported the platform for years, but it was no longer keeping up.

As the number of services grew past 70 (already a fairly large setup for Docker Swarm), the system became harder to manage, keep stable, and scale. The team needed to replace Docker Swarm with Kubernetes without affecting customers or interrupting the platform. This became the starting point for the collaboration with us at IT Outposts.

Meet the Client and Their Mission

Our client builds software that helps companies make crew travel easier to manage and less time-consuming. The goal of the platform is to allow customers to search, book, and update trips themselves in one place.

Over time, the platform grew into a system of more than 70 microservices supporting different parts of the booking process. As the company prepared for further growth (with a goal of growing 2.5 times), the team needed infrastructure that would stay reliable as the business grew. This was critical because customers expect bookings and changes to work immediately, at any time of day, and even small disruptions can affect real travel plans.

At the same time, the infrastructure had to scale with the product and remain easy to manage.

These reliability and scalability requirements eventually led to the decision to modernize the platform and rethink how it runs behind the scenes.

The Infrastructure We Started With

As the product evolved, the infrastructure turned into a mix of services, scripts, and configurations that was increasingly hard to manage. For instance, to investigate an issue, the team often had to switch between different tools and logs, and it was not always clear how one service affected another.

At the same time, the infrastructure was split between two cloud providers. AWS hosted the main production workloads across multiple accounts, while Hetzner supported selected systems where lower costs made more sense than full AWS-level redundancy. This split reduced infrastructure costs, but it also added complexity, particularly around networking and service communication.

In addition, as mentioned earlier, the platform had to stay online 24/7, and this remained a key requirement throughout the project. The move to Kubernetes still had to happen, though, and since the Docker Swarm cluster hosted several business-critical services, we needed a way to move them without interrupting the platform: any disruption could directly affect ongoing bookings.

So, while Kubernetes was the logical next step, introducing it into a live production system had to be done carefully.

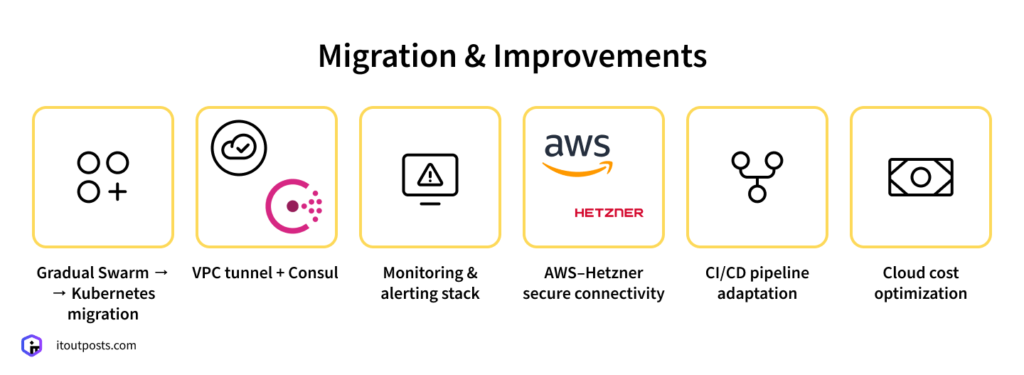

Work Done During the Project

Our team planned a gradual transition where Docker Swarm and Kubernetes would run side by side during the migration.

Migration Planning

We began the migration with non-critical services that had minimal dependencies. This helped us understand how services behaved in Kubernetes under real production conditions and improve the process without risking the core booking flows.

Only after that did we turn to the foundational systems: Redis, MongoDB, and RabbitMQ. Because so many services rely on them, we first mapped out their dependencies to understand exactly how data and messages flowed through the platform. Once those dependencies were clear, we migrated these components.
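The ordering described above (low-risk services first, shared foundations last) can be sketched as a small planning helper. This is a minimal illustration, not the team's actual tooling: the service names, the `CRITICAL` set, and the threshold of two dependents for a "foundational" component are all hypothetical assumptions.

```python
# Hypothetical dependency map: service -> components it depends on.
# Names are illustrative only, not the client's real services.
DEPENDENCIES: dict[str, set[str]] = {
    "report-export": {"mongodb"},
    "notifications": {"rabbitmq"},
    "search-api": {"redis", "mongodb"},
    "booking-api": {"redis", "mongodb", "rabbitmq"},
    "redis": set(),
    "mongodb": set(),
    "rabbitmq": set(),
}

# Hypothetical set of services on the core booking path.
CRITICAL = {"search-api", "booking-api"}


def count_dependents(deps: dict[str, set[str]]) -> dict[str, int]:
    """Count how many services rely on each component."""
    counts = {name: 0 for name in deps}
    for required in deps.values():
        for component in required:
            counts[component] = counts.get(component, 0) + 1
    return counts


def plan_waves(
    deps: dict[str, set[str]], critical: set[str], shared_threshold: int = 2
) -> dict[str, list[str]]:
    """Split components into early, middle, and late migration waves."""
    dependents = count_dependents(deps)
    waves: dict[str, list[str]] = {"early": [], "middle": [], "late": []}
    for name in sorted(deps):
        if dependents[name] >= shared_threshold:
            waves["late"].append(name)  # foundational: many services rely on it
        elif name not in critical and len(deps[name]) <= 1:
            waves["early"].append(name)  # low risk: non-critical, few dependencies
        else:
            waves["middle"].append(name)
    return waves
```

With the sample map, `notifications` and `report-export` land in the early wave, the booking services in the middle, and Redis, MongoDB, and RabbitMQ last, because each has at least two dependents.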

Making Swarm and Kubernetes Work Together

During the transition, Docker Swarm and Kubernetes ran side by side for some time. Some services were already in Kubernetes, while others still lived in Swarm, and they had to communicate without issues.

To make that possible, we connected the two environments through a VPC tunnel. On top of that, our engineers introduced Consul as a shared service discovery tool. Any service can now use Consul to find the services it depends on, regardless of which environment they’re running in.
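To illustrate how a service can look up its dependencies through Consul regardless of where they run, here is a minimal sketch that uses Consul's HTTP health endpoint (`/v1/health/service/<name>` with the `passing` filter, which returns only instances whose health checks pass). The Consul address is a hypothetical placeholder, and the article does not say which Consul interface the client's services actually use (HTTP API, DNS, or a client library).

```python
import json
import urllib.request

# Hypothetical Consul address; replace with the agent reachable from your network.
CONSUL_URL = "http://consul.internal:8500"


def parse_healthy_instances(entries: list[dict]) -> list[tuple[str, int]]:
    """Extract (address, port) pairs from a Consul health API response."""
    instances = []
    for entry in entries:
        service = entry["Service"]
        # Consul leaves Service.Address empty when the service uses its node's address.
        address = service.get("Address") or entry["Node"]["Address"]
        instances.append((address, service["Port"]))
    return instances


def discover(service_name: str, consul_url: str = CONSUL_URL) -> list[tuple[str, int]]:
    """Return healthy instances of a service, whether it runs in Swarm or Kubernetes."""
    url = f"{consul_url}/v1/health/service/{service_name}?passing"
    with urllib.request.urlopen(url, timeout=5) as resp:
        return parse_healthy_instances(json.load(resp))
```

Because the caller only sees addresses and ports, moving a service from Swarm to Kubernetes changes what Consul returns, not the code that consumes it.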

Making the Platform Easier to Understand and Operate

As the platform expanded, it became more difficult to understand how the system was performing overall. With many services involved in a single booking flow, small problems could slip by until users began to feel the impact. To improve this, we introduced basic Site Reliability Engineering (SRE) practices.

We set up Prometheus, Grafana, and Alertmanager to make it easier to see how the system behaves day to day. Instead of tracking only technical metrics, we focused on what actually affects customers, such as response times and error rates.
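The two customer-facing signals mentioned above, error rate and response time, reduce to simple arithmetic. In Prometheus they are usually computed in PromQL (for example with `rate()` over request counters and `histogram_quantile()` over latency histograms). The sketch below shows the same calculations on raw samples for clarity. Treating only 5xx responses as errors and using the nearest-rank percentile are assumptions for this illustration.

```python
import math


def error_rate(status_codes: list[int]) -> float:
    """Share of requests that failed, counting only 5xx responses as errors."""
    if not status_codes:
        return 0.0
    errors = sum(1 for code in status_codes if code >= 500)
    return errors / len(status_codes)


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]
```

For example, 2 failed requests out of 100 give an error rate of 0.02, and for latencies of 1 to 100 ms the p95 is 95 ms.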

Services were grouped into tiers based on how much they impact users and revenue. For each tier, we defined service-level objectives (SLOs) and service-level agreements (SLAs), which helped clarify what “healthy” looks like and when action is needed.
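One practical consequence of an SLO is an error budget: the amount of unavailability a tier can absorb in a given window before action is required. The tier names and targets below are hypothetical, because the case study does not state the client's actual values. The 30-day window is also an assumption.

```python
# Hypothetical availability targets per tier; not the client's real SLOs.
SLO_TARGETS = {"tier-1": 0.999, "tier-2": 0.995, "tier-3": 0.99}

MINUTES_PER_DAY = 24 * 60


def error_budget_minutes(target: float, window_days: int = 30) -> float:
    """Minutes of allowed unavailability for an availability target over the window."""
    return (1 - target) * window_days * MINUTES_PER_DAY


def budget_remaining(target: float, downtime_minutes: float, window_days: int = 30) -> float:
    """Budget left after the downtime observed so far (negative means the SLO is breached)."""
    return error_budget_minutes(target, window_days) - downtime_minutes
```

At 99.9% over 30 days, a tier can afford about 43.2 minutes of downtime. At 99.5% it can afford 216 minutes, which is why the lower tiers can be handled with less urgency.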

Our team also introduced multiple alerting channels so issues reach the right specialists faster. App admins take care of code-related problems, while infrastructure alerts go directly to the ops engineers.
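In Alertmanager, this kind of split is expressed as child `routes` with `matchers` under the top-level `route`. An alert goes to the first matching child route unless `continue: true` is set, and it falls back to the top-level receiver if nothing matches. The sketch below models that first-match behavior in plain Python so the logic is easy to follow. The `team` label and the receiver names are hypothetical, and this is not Alertmanager's own code.

```python
# Hypothetical routing table: (label matchers, receiver), checked in order.
ROUTES: list[tuple[dict[str, str], str]] = [
    ({"team": "app"}, "app-admins"),
    ({"team": "ops"}, "ops-engineers"),
]

# Fallback receiver, like the receiver on Alertmanager's top-level route.
DEFAULT_RECEIVER = "ops-engineers"


def route_alert(labels: dict[str, str]) -> str:
    """Return the receiver of the first route whose matchers all equal the alert's labels."""
    for matchers, receiver in ROUTES:
        if all(labels.get(key) == value for key, value in matchers.items()):
            return receiver
    return DEFAULT_RECEIVER
```

Labeling alerts at the source (for example, `team="app"` on application error-rate rules) keeps the routing table short and makes ownership explicit.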

Introducing Monitoring That Works Even During Failures

Another concern we needed to address was what would happen if the main Kubernetes cluster went down. If monitoring tools were running inside the same cluster, they could fail at the same time, leaving the team without visibility at the worst possible moment.

To avoid that situation, our team set up a separate management cluster with its own Prometheus and Grafana instances. This monitoring environment mirrors the main one and continues collecting health data independently.
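A minimal sketch of what the independent view can check is shown below. Prometheus sets the `up` metric to 1 for each target it scrapes successfully and 0 when the scrape fails. If the management cluster's Prometheus scrapes workloads in the main cluster, it can list failing targets even when the main cluster's own monitoring is down. The address is a hypothetical placeholder, and the response shape follows Prometheus's `/api/v1/query` instant-query API.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical Prometheus address inside the management cluster.
MGMT_PROMETHEUS = "http://prometheus.mgmt.internal:9090"


def down_targets(response: dict) -> list[str]:
    """Return instances whose `up` value is 0 in an instant-query response."""
    if response.get("status") != "success":
        raise RuntimeError("Prometheus query failed")
    down = []
    for sample in response["data"]["result"]:
        _timestamp, value = sample["value"]  # value is returned as a string
        if float(value) == 0:
            down.append(sample["metric"].get("instance", "unknown"))
    return sorted(down)


def query_up(base_url: str = MGMT_PROMETHEUS) -> dict:
    """Run the instant query `up` against the management cluster's Prometheus."""
    url = f"{base_url}/api/v1/query?" + urllib.parse.urlencode({"query": "up"})
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.load(resp)
```

Usage would be `down_targets(query_up())`, run from the management cluster so it keeps working when the main cluster does not.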

Connecting the Hybrid Cloud Environment

The client’s infrastructure is based on both AWS and Hetzner, so reliable and secure connectivity between environments was essential.

We set up an IPsec tunnel between AWS and Hetzner, allowing services in both clouds to communicate over private IP addresses instead of public networks. This made the environment more secure and predictable.

Within AWS, we also configured VPC peering so services running in different accounts could communicate.
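Both VPC peering and routing over an IPsec tunnel on private IPs depend on address planning. AWS does not allow peering between VPCs with overlapping CIDR blocks, and overlapping ranges on either side of a tunnel make routing ambiguous. The sketch below is a quick pre-check using Python's standard `ipaddress` module. The network names and CIDRs are hypothetical.

```python
from ipaddress import ip_network
from itertools import combinations

# Hypothetical address ranges for the connected environments.
NETWORKS = {
    "aws-prod": "10.10.0.0/16",
    "aws-shared": "10.20.0.0/16",
    "hetzner": "10.30.0.0/16",
}


def overlapping_pairs(networks: dict[str, str]) -> list[tuple[str, str]]:
    """Return every pair of named networks whose CIDR ranges overlap."""
    parsed = {name: ip_network(cidr) for name, cidr in networks.items()}
    return [
        (a, b)
        for a, b in combinations(parsed, 2)
        if parsed[a].overlaps(parsed[b])
    ]
```

An empty result means the ranges can be peered or routed through the tunnel without conflicts. Any pair that is returned needs to be readdressed first.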

Keeping Costs Under Control

Cost is part of the discussion on every project we manage, so we looked for ways to reduce expenses without putting reliability at risk.

One cost-optimization measure was already in place before we started: the client was using a hybrid cloud model. Critical workloads run in AWS, while other systems are hosted on Hetzner, where infrastructure is significantly cheaper. Our part there was improving connectivity between AWS and Hetzner, which the IPsec tunnel described above already covered.

Beyond that, there were other ways to reduce operational expenses, starting with monitoring.

Since monitoring systems generate large amounts of data, we introduced a metrics retention policy where most metrics are stored for 30 days (up to 60 days for selected cases). In practice, a month of metrics is enough to understand problems and track trends, so storing more data would only increase costs.
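The cost effect of retention is easy to estimate. The Prometheus documentation gives a rule of thumb for local storage: disk space ≈ retention time in seconds × ingested samples per second × bytes per sample, with roughly 1 to 2 bytes per sample. Retention itself is set with the `--storage.tsdb.retention.time` flag (for example `30d`). The ingestion rate used below is a hypothetical figure, not the client's.

```python
SECONDS_PER_DAY = 86_400


def estimated_disk_bytes(
    retention_days: int, samples_per_second: int, bytes_per_sample: float = 2.0
) -> float:
    """Rough Prometheus local-storage estimate (upper end of 1-2 bytes per sample)."""
    return retention_days * SECONDS_PER_DAY * samples_per_second * bytes_per_sample
```

At a hypothetical 100,000 samples per second, 30 days works out to about 518 GB and 60 days to about 1.04 TB. Storage grows linearly with retention, so keeping 60 days only where it is needed roughly halves storage for everything else.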

Defining SLOs and SLAs also helps manage costs in the long run. By tying system availability to business impact, our client can better see which services are most critical and address issues before they lead to expensive downtime.

Preserving Familiar Developer Workflows

While we were changing the infrastructure, developers still had to work as they always did; the transition to Kubernetes couldn’t get in the way of regular releases.

For this reason, we adapted the CI/CD pipelines so the process stayed familiar and developers could keep building, testing, and committing code just as before. When code is committed to the main Git repository, it is automatically deployed to either Docker Swarm or Kubernetes, depending on where the service runs.
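A pipeline step that picks the deployment target could look roughly like the sketch below. It builds the command for whichever platform currently hosts the service: `kubectl set image` for a Kubernetes Deployment, or `docker service update --image` for a Swarm service. The `PLATFORM` registry, the namespace, and the assumption that the Deployment, container, and Swarm service are all named after the service are hypothetical. In particular, Swarm services deployed from a stack are usually prefixed with the stack name. The article does not describe how the client's pipelines implement this.

```python
# Hypothetical registry of where each service runs during the migration.
PLATFORM = {"search-api": "kubernetes", "booking-api": "swarm"}


def deploy_command(service: str, image: str, namespace: str = "default") -> list[str]:
    """Build the deploy command for the platform that currently hosts the service."""
    platform = PLATFORM.get(service)
    if platform == "kubernetes":
        # Assumes the Deployment and its container are both named after the service.
        return [
            "kubectl", "-n", namespace, "set", "image",
            f"deployment/{service}", f"{service}={image}",
        ]
    if platform == "swarm":
        # Assumes the Swarm service name matches the service name exactly.
        return ["docker", "service", "update", "--image", image, service]
    raise ValueError(f"unknown platform for service {service!r}")
```

The pipeline would pass the result to something like `subprocess.run(cmd, check=True)`. Moving a service to Kubernetes then only requires updating its entry in the registry, so developers keep committing code the same way.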

Planning Your Next Infrastructure Step?

Many products reach a point where the existing setup starts to hold them back. Kubernetes is often the right next step, but not always, and not in the same way for every project. The choice depends on your system, your goals, and the way your team works.

If you’re thinking about Kubernetes or simply want to improve your current infrastructure, we can go through your setup together and identify which solution makes the most sense.

At IT Outposts, we help teams find the right balance between scalability, reliability, and cost efficiency, identifying places where costs can be optimized, so our clients don’t spend money where it isn’t necessary.

If you’d like to discuss your project, reach out to our team. We’ll take a look and share our expert perspective.

I am an IT professional with over 10 years of experience. My career trajectory is closely tied to strategic business development, sales expansion, and the structuring of marketing strategies.

Throughout my journey, I have successfully executed and applied numerous strategic approaches that have driven business growth and fortified competitive positions. An integral part of my experience lies in effective business process management, which, in turn, facilitated the adept coordination of cross-functional teams and the attainment of remarkable outcomes.

I take pride in my contributions to the IT sector’s advancement and look forward to exchanging experiences and ideas with professionals who share my passion for innovation and success.