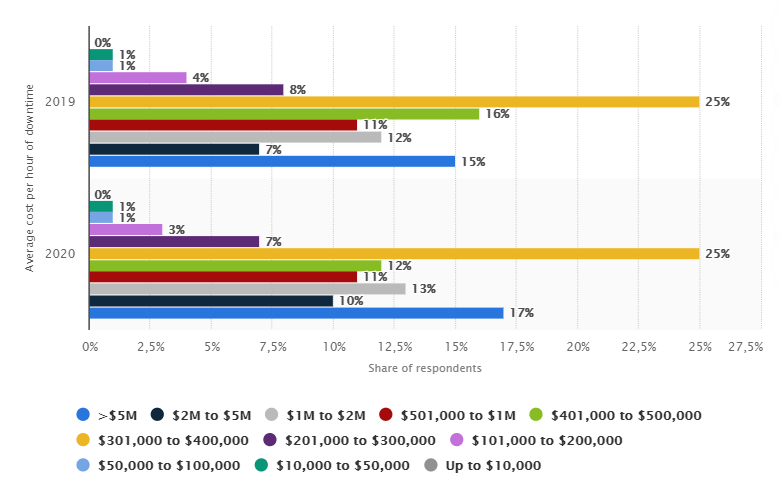

According to statistics, in 2020, 25% of surveyed respondents reported that the average cost of server downtime ranged from 301,000 to 400,000 US dollars. These are colossal numbers, especially when they represent pure losses.

Let’s find out what the term “downtime” means, how to calculate the average cost of downtime, and how IT infrastructure support can protect your business from unexpected losses.

What Is Downtime and How to Prevent It?

Downtime is the period of time when a business stops operating. Some downtime occurs in any enterprise, because office equipment and software, as a rule, require regular maintenance. Unplanned downtime, however, is a real threat to a company that doesn’t know how to eliminate or minimize it. There are several common causes of downtime:

- network error;

- power outage;

- malware;

- hardware failure;

- natural disasters.

Each of these causes can affect a company differently, depending on how many business processes are disrupted.

For example, a single computer infected with malware usually causes minimal damage. But if the malware spreads across the entire network, the losses rise sharply. To avoid this, companies usually keep a backup system that lets employees continue working while the problem is being fixed.

Companies also try to minimize staff unavailability by cross-training employees. That way, any employee can cover a colleague’s tasks while that colleague is away. This is how enterprises keep operating regardless of any force majeure.

Now, let’s find out how to calculate the cost of downtime.

What goes into the cost of downtime?

A simple way to estimate the real cost of downtime is as follows:

annual revenue / working days per year / working hours per day.

For example, if your company earns $5 million a year and works on average 23 days a month (276 days a year) for about 12 hours a day, then the calculation of the cost of downtime will look like this:

$5 million / 276 business days / 12 hours = about $1,510 lost for every hour of your company’s downtime.
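The same arithmetic can be written as a short Python sketch. The function and parameter names are illustrative assumptions, and the model assumes revenue is earned evenly across all working hours, which is a simplification.

```python
def hourly_downtime_cost(annual_revenue, working_days_per_month, hours_per_day):
    """Estimate the revenue lost per hour of downtime.

    Assumes revenue is spread evenly over every working hour of the year.
    """
    working_days_per_year = working_days_per_month * 12
    return annual_revenue / working_days_per_year / hours_per_day


# Figures from the example: $5 million a year, 23 days a month, 12 hours a day.
cost = hourly_downtime_cost(5_000_000, 23, 12)
print(f"${cost:,.0f} lost per hour of downtime")
```

Plugging in your own revenue and schedule gives a first rough estimate; it does not include the indirect costs of downtime.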

Once you have calculated the cost of downtime, you can analyze its impact on your budget and assess how much loss your business can sustain. You can then plan a disaster recovery program that keeps disasters from causing downtime or data loss beyond those limits.

Cost of Application Downtime

Application downtime costs can be direct and indirect. Direct costs are associated with the production of goods, functions, or services. Indirect costs are more difficult to measure, but they can bring more losses to your company. There are different categories of indirect costs such as:

- business expenses. These are costs associated with salaries, repairs, loss of inventory, or other losses incurred during downtime;

- restoration costs. These cover the work of bringing systems back online, including IT staff overtime during recovery;

- loss of customers. Customers may prefer other companies if they consider yours unreliable;

- damage to reputation. Bad publicity can also harm your company: negative online reviews leave a bad impression of your brand.

Cost of website downtime

Many factors affect the cost of website downtime. One of them is the size of your company, but that’s not all. It also matters whether your business can still process orders while the website is down. For businesses that operate exclusively online, website downtime can cause significant losses.

Another factor that determines the cost of downtime is your website’s sales. If you earn an average of $2,000 per hour, a minute of website downtime will cost you about $33. If your website goes down during rush hour, that figure can be many times higher.
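As a rough sketch, the per-minute figure and the rush-hour effect can be expressed in a few lines of Python. The `peak_multiplier` parameter and the 3x value used below are hypothetical illustrations, not figures from any survey.

```python
def downtime_loss(hourly_sales, minutes_down, peak_multiplier=1.0):
    """Sales lost during an outage.

    peak_multiplier is a hypothetical factor for how much busier than
    average the site is when the outage happens (1.0 = average traffic).
    """
    return hourly_sales / 60 * minutes_down * peak_multiplier


# One minute of downtime at $2,000 of sales per hour.
print(round(downtime_loss(2000, 1)))

# Fifteen minutes of downtime at an assumed 3x rush-hour rate.
print(round(downtime_loss(2000, 15, peak_multiplier=3.0)))
```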

In such cases, website monitoring services come to the rescue. Such services alert you to unforeseen crashes quickly, which helps you avoid unnecessary costs.
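To give an idea of what a monitoring service does at its simplest, here is a minimal availability check using only the Python standard library. It is a sketch under assumptions: the function name `is_up` and the "status below 400 means up" rule are illustrative choices, and real monitoring services check from several locations and track response times as well.

```python
import urllib.error
import urllib.request


def is_up(url, timeout=5.0, opener=urllib.request.urlopen):
    """Return True if the URL answers with an HTTP status below 400.

    The opener parameter exists only so the check can be tested
    without network access.
    """
    try:
        with opener(url, timeout=timeout) as response:
            return response.status < 400
    except (urllib.error.URLError, OSError):
        # DNS failures, refused connections, timeouts, and HTTP error
        # responses (HTTPError is a subclass of URLError) all count as down.
        return False
```

A scheduler could call `is_up` for your site every minute and notify the on-call engineer after a few consecutive failures.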

Cost of downtime calculator

If you want to calculate the cost of downtime in your specific case as accurately as possible, you can use one of the following calculators.

Cost of cloud downtime

Cloud providers can’t promise you zero downtime, but there are procedures to help you minimize it. To reduce the cost of cloud server downtime by yourself, you should develop a proactive downtime policy.
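To see why zero downtime is not a realistic promise, it helps to translate an availability percentage into hours. The sketch below does that conversion; the percentages are illustrative assumptions and are not taken from any particular provider’s agreement.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(availability_percent):
    """Hours of downtime per year that a given availability level still permits."""
    return HOURS_PER_YEAR * (1 - availability_percent / 100)


# Illustrative availability levels.
for availability in (99.0, 99.9, 99.99):
    hours = allowed_downtime_hours(availability)
    print(f"{availability}% availability allows {hours:.2f} hours of downtime per year")
```

Even 99.9% availability still leaves room for roughly 8.76 hours of downtime a year, which you can multiply by your hourly downtime cost.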

With cloud management, you need to be proactive across the board. Talk to your IT team about strategies to mitigate the impact of cloud downtime, automate downtime detection, and develop a plan for resuming work. It’s also worth backing up your data regularly to avoid losing it. That way you are prepared for downtime, which minimizes your business’s losses.

How to minimize the average cost of network downtime?

A network failure can freeze all work processes, from sales to management. This can cost your company profits, customers, and productivity. It can even bring a small business to a complete standstill.

One solution to these problems is packet load balancing with SD-WAN, which automatically balances packets across multiple links at each site and adapts bandwidth use to keep network performance high. These are the main features of packet load balancing:

- monitors the quality of each circuit in the tunnel connecting the site to the network;

- ensures that packets are sent over the best-performing circuits at all times;

- removes underperforming circuits from the tunnel to provide better performance and uptime;

- dynamically adds circuits back to the tunnel once their performance returns to normal thresholds.
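The behavior in this list can be illustrated with a toy model. This is only a sketch under assumptions: the `Link` and `Tunnel` classes, the packet-loss metric, and the 2% threshold are hypothetical, and real SD-WAN products use their own vendor-specific measurements and algorithms.

```python
from dataclasses import dataclass


@dataclass
class Link:
    name: str
    loss_percent: float  # most recent measured packet loss on this link


class Tunnel:
    """Toy tunnel that spreads packets round-robin over healthy links."""

    def __init__(self, links, max_loss_percent=2.0):
        self.links = list(links)
        self.max_loss_percent = max_loss_percent  # hypothetical threshold
        self._sent = 0

    def active_links(self):
        # Links above the loss threshold are left out; they rejoin
        # automatically once their measured loss drops back below it.
        return [l for l in self.links if l.loss_percent <= self.max_loss_percent]

    def next_link(self):
        """Choose the link that carries the next packet."""
        active = self.active_links()
        if not active:
            raise RuntimeError("no healthy link available")
        link = active[self._sent % len(active)]
        self._sent += 1
        return link


tunnel = Tunnel([Link("fiber", 0.1), Link("broadband", 0.5), Link("lte", 8.0)])
print([tunnel.next_link().name for _ in range(4)])  # "lte" is skipped while lossy

tunnel.links[2].loss_percent = 1.0  # the LTE link recovers
print([l.name for l in tunnel.active_links()])  # all three links are used again
```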

What else affects the average cost of server downtime?

To calculate the cost of server downtime in an enterprise, it is important to take into account such parameters as:

- number of employees;

- number of administrators;

- average working week (working hours per day and working days per week);

- the company’s annual gross income;

- hourly wage of an administrator;

- hourly wage of an employee.

Planned server outages also need to be taken into account:

- the number of outages per month;

- the average duration of outages;

- the number of users disconnected at the same time;

- the number of administrators involved in this process.
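One possible way to combine the outage parameters with the hourly wages is sketched below. The formula is an assumption made for illustration (idle staff time plus administrator time per outage); it ignores lost revenue, and all input values are hypothetical.

```python
def planned_outage_cost_per_year(
    outages_per_month,
    avg_duration_hours,
    users_disconnected,
    employee_hourly_wage,
    admins_involved,
    admin_hourly_wage,
):
    """Yearly labor cost of planned server outages (assumed simple model)."""
    idle_staff_cost_per_hour = users_disconnected * employee_hourly_wage
    admin_cost_per_hour = admins_involved * admin_hourly_wage
    cost_per_outage = avg_duration_hours * (idle_staff_cost_per_hour + admin_cost_per_hour)
    return cost_per_outage * outages_per_month * 12


# Hypothetical inputs: 2 outages a month lasting 1.5 hours each,
# 40 disconnected users at $30/hour and 2 administrators at $45/hour.
yearly_cost = planned_outage_cost_per_year(2, 1.5, 40, 30, 2, 45)
print(f"${yearly_cost:,.0f} per year")
```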

One-stop Solution to Minimize Downtime – DevOps

Implementing DevOps is an effective way to minimize the number and duration of downtime incidents, both planned and unplanned.

If you want to implement DevOps processes, you are better off investing in skills and tools like Terraform, Kubernetes, Docker, Ansible, Jenkins, ELK, Prometheus & Grafana, and many more. By the way, most DevOps tools are open source, which means there are huge savings in the long run, even though the initial transition can be quite challenging.

For example, Terraform and Ansible are IaC (Infrastructure as Code) tools that make environments reusable (i.e., you can deploy any number of environments in minimal time) and support a reliable disaster recovery plan without extra infrastructure costs.

Docker and Kubernetes help manage and optimize microservices. If an application’s microservices are containerized separately, the failure of one microservice doesn’t affect the performance of the others. Kubernetes also lets you scale the infrastructure flexibly based on the application’s actual needs, which at least minimizes failures under high load.

Jenkins is one of many CI tools that help automate integration and deployment processes and, hence, minimize manual intervention and reduce the risk of errors and failures.

Prometheus and Grafana are among the most widely used open-source monitoring tools. Apart from tracking infrastructure system metrics, they let you configure business indicators, track the performance of each microservice as well as of the whole project, and predict failures (or promptly pinpoint the causes of errors).

ELK (Elasticsearch + Logstash + Kibana) and EFK (Elasticsearch + Fluentd + Kibana) are logging stacks that enable flexible analysis of the application’s state and of internal incidents.

Apart from the tools mentioned here, there are plenty of others for improving the availability of your project. The main pro tip here is to try them out, but entrust their maintenance only to specialized professionals.

When IT operations are automated, you can also deploy a machine learning model to perform predictive analytics. Such a model learns your systems’ normal operating patterns and monitors them. At the first signs of a possible failure, it triggers one of several countermeasure scenarios, reducing the consequences of downtime if it occurs.

Conclusion

In this article, we explained what downtime is, how to calculate its cost, and how to deal with its consequences. Downtime can cause huge losses for your company, so it’s worth considering solutions now that prevent it or quickly eliminate its consequences.

We at IT Outposts have extensive experience with DevOps practices, so if you want to prevent downtime, our specialists are always ready to help!
